This is the last of three posts on the ISMAR09 experiential learning workshop.

Post one and post two covered the morning presentations on current applications, while this one will attempt to capture the excellent group discussion that took place in the afternoon.

The afternoon's format was to look at three main questions about education and augmented reality, each one building on the last. For each question, we broke ourselves into three groups, discussed the topic for 15 minutes (or more, in most cases), and then shared our thoughts with the group. My notes below will consist of our own group's findings first, which will naturally have more detail. Points from the other groups will follow - members of these groups are most definitely invited to add more insight or links to their own blog posts in the comments.

What are the Key Elements of Mixed and Augmented Reality that Create a Meaningful Experience?

I got this one started by explaining something I tell my friends and family when they want to know about augmented reality. I feel that one of the big benefits of AR is that you essentially reduce the number of levels of indirection required to do something. For example, consider a traditional map. You have a bird's eye, (usually) non-photorealistic view of the world that you must rotate and project onto the real world in front of you. What if that information was augmented for you in the first place? You can free up all that cognitive power for the actual task at hand (such as learning).

Another key element suggested was the idea that augmented reality should not provide the entire story - the imagination should have the ability to work its magic, too. You should also be able to bring in other senses beyond vision, which is what makes the presence of the physical world so important. Having an EyePet in a completely virtual world is somehow different from playing with it in your living room - in the latter case, the broader context of your own culture is included in the gameplay.

Augmented reality allows non-experts to participate in and understand tasks outside their field. For example, it seems unlikely that Disney could have succeeded in getting permission to build Disney World here in Orlando today. But if the city council (or whoever needed to vote) were able to see with their own eyes exactly how it would all look, and how, say, emergency evacuations would work, things might be different.

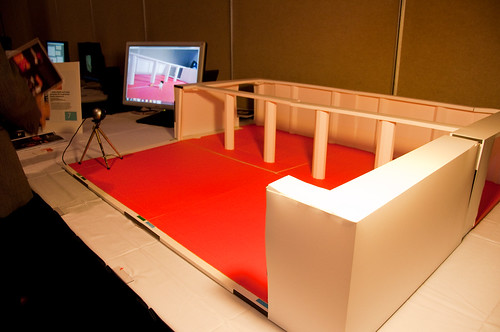

Our group also believed that the most meaningful experiences would come from free-range AR, where much larger environments can become immersive sandboxes for learning. This setup could also lead to a more social experience.

Another key point was on the adaptability of software. Ideally, AR programs would learn about you as you learned them. Of course, this requires much more advanced artificial intelligence than what is available today, but we do get better all the time at mimicking this ability.

Finally, we decided that AR would be most meaningful when it was personalized. This refers to not just the changing viewpoint of the virtual objects, but also the content of the virtual portion of the environment itself. This, among other things, will help avoid information overload.

Points from other groups:

- AR needs to be consistent with what's expected in the real world (it has to "make sense").

- There must be an element of surprise and magic.

- It should be social, approachable, and easy to use.

- Users should enjoy being tricked/surprised.

- The end user experience is key (not the technology itself).

- There should be some degree of being novel or special.

- It should be scalable in terms of time, space, size, and orientation.

- It will provide the ability to experiment where it was once impossible.

- It must be reliable enough to reflect realism.

How Do We Continue the Learning Experience Once the User Leaves?

The first example our group discussed was the idea of capturing information about the experience that can then be used later in various ways. For instance, a military training exercise might record the decisions made for a particular scenario, and the user can bring that home and show his or her family what they experienced. They can compare their stats to others who have done the same scenario, and so on. The question then becomes: what is the best way to present the data? Whatever it is, it shouldn't replace the original experience. Otherwise, there's no reason to use the augmented reality again (or, for instance, no reason to go to a museum again).

An interesting discussion started about whether doing a good enough job in creating the experience is enough to spark interest in a certain topic such that the user will go home and learn more about it. The example of the Louvre was that most visitors look at art for only 30 seconds or so, when you need at least two full minutes to fully appreciate the details. If proper viewing were encouraged by the AR experience, perhaps that would be enough to make visitors want to find out more. What if the Mona Lisa had an augmentation of da Vinci putting on the finishing touches after acting out some story related to life in that era? Would you be more inclined to find out more about da Vinci?

Finally, we felt it was key to avoid making it about the technology - the tech needs to be invisible. This way, the focus will be on the topic at hand, which again will make for an easier transition to, say, a follow-up activity to be done at home.

Points from other groups:

- A museum exhibit can have a take-home piece so the adventure can be continued (for example, your own fish from the main giant fish tank exhibit). The individual experience is sparked thanks to the larger context of the exhibit.

- Make the follow-up activity viral. Share with friends and family.

- Allow learners to finish the story at home when they run out of time.

- Provide networking opportunities online.

- Create physical activities later on.

What is Novel When it Comes to Augmented Reality and Learning?

We agreed that augmented reality isn't a new paradigm shift, but rather another tool in a teacher's toolkit. However, this tool might benefit a teacher in many ways. For instance, it may be easier to employ than other computer-based demonstrations if it's as easy to use as we insisted it be in the earlier questions. Furthermore, the exploratory nature makes for an environment that allows a teacher to say "I don't know, let's find out," avoiding the fear of teaching a topic they don't understand well themselves. Finally, it might be something that is much better than just Googling a topic because it would certainly be more immersive.

Another advantage of AR in the classroom is that it would be more repeatable than more free-form techniques, making it possible to standardize the content (though not the experiences) of AR scenarios across the board.

It may also open up opportunities for standardized learning at home. This might help capture the attention of the gifted students and help the struggling students catch up. It would even be possible to have distributed study groups who could interact with the same virtual object.

In a training context, augmented and mixed reality have already proven to be very effective. Apparently many commercial pilots take their first flight in a real jet because the simulators are just that good.

Thinking more simply to see what could be done now, it's clear that printed material can be augmented with markers and cell phones used to view them (and kids would love getting permission to pull out their phones in class!).

Points from other groups:

- Will AR be a revolution or just an evolution? Can we truly improve learning with AR? Perhaps we won't truly know for another few decades.

- AR provides a different dimension related to creativity and self-reflection. It can be about exploration, not necessarily just making abstract concepts concrete.

- Main barrier: How will it improve people's lives? We just don't know - there is a lack of understanding that won't be solved until we start getting more products in people's hands.

- What are we trying to accomplish with AR? Connection, relevance, and perspective? How?

Conclusion

That concludes the workshop on experiential learning. I will be taking away the excellent thoughts and insights from the three posts on this blog, as well as a better appreciation for the big picture. I hate to admit it, but when thinking about the game I want to build for my PhD research, I got stuck in thinking of a basic marker-based interaction. There's so much more to AR that it would be tragic to miss considering it all.